The adventures continue! I run a number of computers at home, almost entirely GNU/Linux-based. As the hardware continues to age, data integrity has become more and more of a concern. I am particularly worried about sudden disk failures, and with a combination of ddrescue and timeshift I have been setting […]

In October 2023, I had the great pleasure of journeying from Sudbury to Modane, France, to participate in a workshop at the Modane Underground Laboratory (Laboratoire Souterrain de Modane, or LSM). That was an incredible work period and a great chance to visit the underground laboratory, which is a “horizontal […]

Join me in this continuing series of posts that explores the development of the material for my public lecture on Thursday, March 14, entitled “Catch a Dying Star: Astronomy Deep Underground”. Today, I explore the development of the storyline.

Join me for a series of posts that explores the development of the material for my public lecture on Thursday, March 14, entitled “Catch a Dying Star: Astronomy Deep Underground”. Today, I explore the origin of the title and the material that is the rough foundation of the lecture.

Updated October 28, 2024: I added information about the new companion website for the book! We’ve launched! While the official release date of the book is October 31 (aka Dark Matter Day … aka Halloween!), it is now available to order. Copies should start arriving on or about October 31 […]

I have decided to make a commitment to cover all three of the basic science Nobel Prizes this year. Next up is the Chemistry Prize! The prize announcement will begin no earlier than 05:45am Eastern Time (11:45am CEST) on Wednesday, October 9. I’ll be up with coffee in hand to watch […]

I have decided to make a commitment to cover all three of the basic science Nobel Prizes this year. Next up is the Physics Prize! The prize announcement will begin no earlier than 05:45am Eastern Time (11:45am CEST) on Tuesday, October 8. I’ll be up with coffee in hand to watch […]

Live updates are at the bottom of this post (scroll down to see them). I have decided to make a commitment to cover all three of the basic science Nobel Prizes this year. I will confess: I know the limits of my knowledge and the prize for “Physiology or Medicine” […]

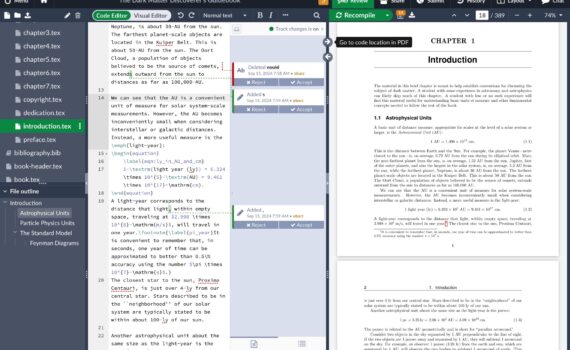

Jodi and I were very fortunate to be able to get a lot of feedback on our book draft for “The Dark Matter Discoverer’s Guidebook”. We finished receiving all the feedback a few weeks ago. This was the scariest part of the writing process. You spend so much time with […]

Today was a wonderful day. The host site for SNOLAB, the Creighton Mine, ran its Family Day events this afternoon. Food, a tour of mine equipment, games, music … and science! SNOLAB was part of the festivities, and the lab came together to share wonder and discovery with the mothers, […]

Three years in the making, “The Dark Matter Discoverer’s Guidebook” – a labour of love and intellectual pursuit – is almost ready for publication. The goal is to have this published and available on or around Dark Matter Day (October 31). This book was born from a pandemic delay, a […]

I recently had the humbling experience of officiating the second wedding of my life. The first was my sister’s wedding. The second, just a week ago, was that of my most recent PhD student, Chris. This one was, however, unique: I had to do some writing for the ceremony. Apparently, […]