Tomorrow I will board a plane at the Sudbury airport and, connecting through Toronto, depart for Fredericton, New Brunswick. I am excited about this for at least two reasons. First, this coming week is the Canadian Association of Physicists (CAP) annual Congress. The week-long meeting will bring students, post-docs, […]

It has been a long time since I was crammed in a big unpleasant crowd of people. Let’s take stock of the last three years. My last major international trip was at the very beginning of March 2020. Outbound from Dallas to CERN, the planes were semi-normal (more masking than […]

After two negative COVID-19 antigen tests this past week, I returned to in-person work. I kept myself masked in the office, especially when I was around anyone else. When alone in my own office, or eating in a more open public area, I lost the mask (unless someone showed up […]

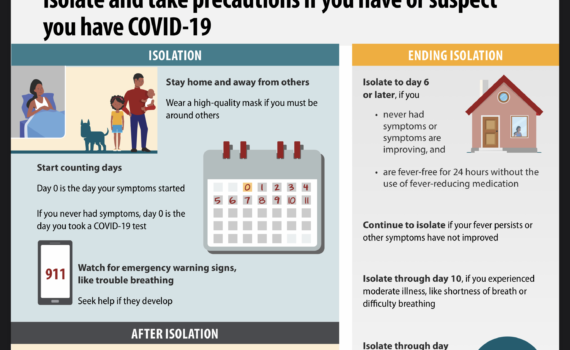

The CDC recommends calling the first day you present symptoms “Day Zero”. “Day One” is the first full day over which you experience symptoms from a SARS-CoV-2 infection. By that counting, I am on day 8, the 8th full day of symptoms. What is impressive to me is the staying […]

In the spirit of deepening the open federated social web, this blog is now powered by ActivityPub, the open federated social standard. This is thanks to the WordPress plugin “activitypub”. You can follow this blog using the webfinger @steve. Or not. It’s cool. Whatever. 🙂

The World Health Organization declared SARS-CoV-2 a global pandemic on 11 March 2020. At the time, I was isolating at home after returning from CERN. I was not allowed on campus because I had been abroad when my university declared that all international travel for work purposes was ended. But […]

Mastodon has been around for a while, but garnered interest recently in the wake of Twitter’s leadership-driven meltdown. I tried setting up a Mastodon instance over a year ago but abandoned the effort due to time pressure. My interest in spinning up an instance was rekindled by recent events coupled […]

We had quite a storm last night, with some power outages from high winds, ice, and snow. But everything has been quite driveable this morning (although the school system cancelled buses today). Here is the view from Highway 144 on the way into SNOLAB.

I hate moving. The fact that I am so happy after the big relocation from Texas to Canada tells you how great Sudbury and SNOLAB have been. Moves irritate me because it takes so long to recover from them. You unpack for what feels like (or actually is) years. I […]

Happy new year, everyone. The calendar says it is 2023. From the perspective of our orbit about the Sun – the solar year – we are still several hours from that mark. Earth takes 365.25 Gregorian calendar days to make one orbit about the Sun. As a result, every fourth […]

It was October in the void. The white dwarf, Nain Blanc, had been, for some time, slurping up the hydrogen and helium from the vast gas envelope of its partner, a red giant, Géant Rouge. When they were younger, the two stars burned bright in the dark of empty space, […]

The pastor was prayin’
For sinners unpracticed,
Preparin’ communion
(Except for us Baptists)

And I’m in the second pew ready for healin’
When a voice next to me says “We should be wheelin’;
The ER’s the place that I want to be
On Christmas Eve, o brother, why can’t you see?”

We’ll go to the ER
And […]